Caching as Part of Software Architecture: 5 min read

How to boost software performance with caching

In the previous post we talked about how to scale your app and improve its responsiveness from a very high level. We briefly touched on caching at the application level but did not talk about web caching. Here I want to briefly turn our focus to both application-level caching and web caching.

Caching is a technique that stores a copy of a given resource and serves it back whenever it is requested. It enables the reuse of previously fetched resources, reducing latency and network traffic and thus increasing the responsiveness and performance of applications.

A developer can define the service’s caching strategy at the application level as well as at the HTTP level.

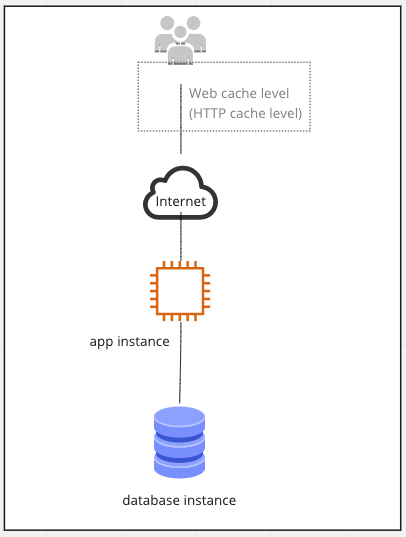

Web caching (or HTTP caching)

Web caching can be either shared or private. While a shared cache stores resources for reuse by more than one user, a private cache is designed for a single user. We will focus on the private cache, as it fits most use cases when developing mobile or web applications.

Private web caching, also known as web browser caching, stores responses to GET requests in the client’s browser and returns the stored response whenever the client makes the same GET request again.

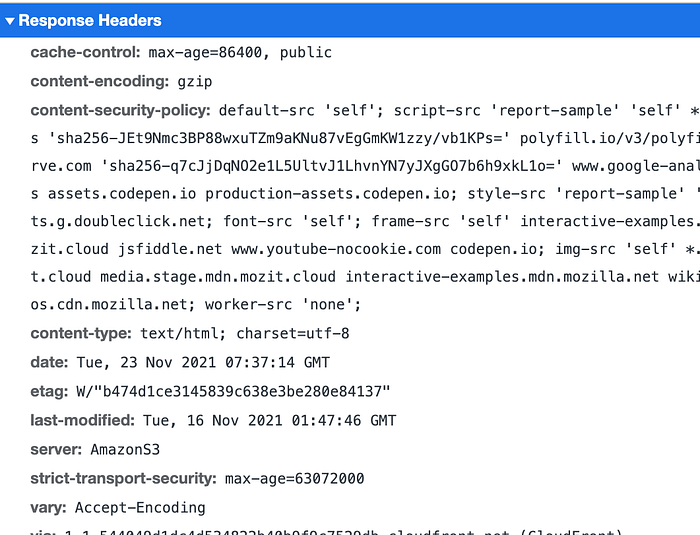
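To make the idea concrete, here is a toy model of a private cache, written in JavaScript to match the Node.js example later in this post. It is only a sketch of the behavior described above, not how browsers actually implement caching: `createPrivateCache` and the injected `fetchFromNetwork` function are hypothetical names, and real browsers also decide how long a stored response stays usable based on HTTP headers instead of keeping it forever.

```javascript
// Toy model of a private (single-user) browser cache.
// Only GET responses are stored; other methods always go to the network.
// Hypothetical: `fetchFromNetwork(method, url)` stands in for a real request.
function createPrivateCache(fetchFromNetwork) {
  const store = new Map(); // url -> stored response

  return function request(method, url) {
    if (method !== 'GET') {
      return fetchFromNetwork(method, url);
    }
    if (store.has(url)) {
      return store.get(url); // same GET again: serve the stored copy
    }
    const response = fetchFromNetwork(method, url);
    store.set(url, response);
    return response;
  };
}

// Usage: count how often the "network" is actually hit.
let networkCalls = 0;
const request = createPrivateCache((method, url) => {
  networkCalls += 1;
  return { status: 200, body: `events for ${url}` };
});

request('GET', '/events?month=12');
request('GET', '/events?month=12');
console.log(networkCalls); // 1
```

The second identical GET never reaches the network, which is exactly where the latency and traffic savings come from.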

As a developer, you can control what can be cached in the client’s browser, and for how long, whenever a GET request hits your service, thanks to HTTP caching directives (i.e. HTTP headers). Here are a few examples of those directives:

- cache-control specifies the max-age (in seconds) of a cached resource and whether the response is public or private (i.e. whether it may be stored by a shared cache or is intended for a single user only). Alternatively, you may set it to no-store (no caching allowed) or no-cache (the cache must revalidate with the server before returning the stored copy).

- etag identifies a specific version of a resource, so the cache can check with the server whether the content has changed. ETags also help deal with simultaneous updates of a resource, but that is another topic.
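Below is a minimal sketch of how a service might send both directives, using only Node’s built-in `http` and `crypto` modules. Assumptions: `makeCachedHandler` and `getBody` are hypothetical names, the ETag is a SHA-1 hash of the body, and the `If-None-Match` check is simplified to an exact match against a single tag (the real header may list several tags or weak validators).

```javascript
const crypto = require('crypto');
const http = require('http');

// Derive a strong ETag from the body: identical bytes give an identical tag.
function computeEtag(body) {
  const hash = crypto.createHash('sha1').update(body).digest('hex');
  return `"${hash}"`;
}

// Hypothetical handler factory for a GET endpoint.
// `getBody` returns the current representation as a string.
function makeCachedHandler(getBody, maxAgeSeconds) {
  return (req, res) => {
    const body = getBody();
    const etag = computeEtag(body);

    // Only the user's own browser may store this response, for maxAgeSeconds.
    res.setHeader('Cache-Control', `private, max-age=${maxAgeSeconds}`);
    res.setHeader('ETag', etag);

    // Once the copy is stale, the browser revalidates by sending its stored
    // ETag in If-None-Match. Simplified: exact match against one tag only.
    if (req.headers['if-none-match'] === etag) {
      res.statusCode = 304; // Not Modified: the cached copy is still valid
      res.end();
      return;
    }

    res.statusCode = 200;
    res.setHeader('Content-Type', 'application/json');
    res.end(body);
  };
}

// Wiring it into a server (not started here):
const server = http.createServer(
  makeCachedHandler(() => JSON.stringify({ events: [] }), 3600)
);
// server.listen(3000);
```

A 304 response carries no body, so when the content has not changed only the headers travel over the network.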

Here is an example of response headers from an MDN Web Docs response, where you can find the previously mentioned HTTP directives, such as cache-control and etag.

As a result, this is how our app design looks at a high level when we use web caching.

You can take a detailed look at various caching strategies in the MDN Web Docs, which provide a lot of information about the topic along with nice illustrations.

Application caching

Imagine you have an API that provides information on upcoming local holidays and events. 90% of your web requests are read operations: fetching events for a specific period of time from the database, transforming them into a viewable state, and returning them to the user. Users can’t remember the details of the events, so they tend to come back to your application to fetch the same data from time to time. Moreover, as certain events approach the current date, more and more users request very similar data.

It is much more cost-efficient to store frequently fetched data in the app’s memory or in lightweight data storage for future requests, instead of running expensive database queries and transforming the results into a viewable state over and over again. This also improves the speed and responsiveness of the application. This is where you need application-level caching.

As a developer, you can achieve application-level caching by storing frequently requested data in the app’s memory or, preferably, on a separate server running a dedicated caching engine such as Memcached or Redis.
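A minimal in-memory version of this idea is the cache-aside pattern: look in the cache first, and only on a miss run the expensive query and transformation, then store the result with an expiry time. In the sketch below, `queryEventsFromDb` and `toViewModel` are hypothetical stand-ins for the database query and the transformation step of the holidays-and-events example, the cache key is the requested period, and entries expire after a fixed time-to-live (TTL) so that updated events eventually show up.

```javascript
// Minimal in-process cache with a time-to-live (TTL) per entry.
class TtlCache {
  constructor(ttlMs, now = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock, handy for tests
    this.entries = new Map(); // key -> { value, expiresAt }
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // stale: drop it and report a miss
      return undefined;
    }
    return entry.value;
  }

  set(key, value) {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

// Hypothetical expensive steps from the holidays-and-events example.
let dbQueries = 0;
async function queryEventsFromDb(from, to) {
  dbQueries += 1; // pretend this is a slow database query
  return [{ name: 'New Year', date: '2024-01-01' }];
}
function toViewModel(rows) {
  return rows.map((r) => `${r.date}: ${r.name}`);
}

const eventsCache = new TtlCache(10 * 60 * 1000); // 10 minutes

// Cache-aside: check the cache, fall back to the database on a miss.
async function getEvents(from, to) {
  const key = `events:${from}:${to}`;
  const cached = eventsCache.get(key);
  if (cached !== undefined) return cached;

  const view = toViewModel(await queryEventsFromDb(from, to));
  eventsCache.set(key, view);
  return view;
}
```

Calling `getEvents('2024-01-01', '2024-01-31')` twice within ten minutes runs the database query only once. Note that a cache living in the app’s memory is lost on restart and is not shared between app instances, which is one reason a separate caching server is often preferred.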

Here is an example adapted from a digitalocean.com tutorial for a Node.js application, in which memcachedMiddleware checks the client’s requests against the configured cache storage (Memcached in this case, but it could be Redis too).

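```javascript
const express = require('express');
const Memcached = require('memcached');

const app = express();
const memcached = new Memcached('127.0.0.1:11211');

// Configure the memcachedMiddleware, which is going to be used
// between our server and client requests.
// `duration` is the cache lifetime in minutes.
const memcachedMiddleware = (duration) => {
  return (req, res, next) => {
    // The parentheses matter: without them the `|| req.url`
    // fallback would never be used.
    const key = '__express__' + (req.originalUrl || req.url);

    memcached.get(key, (err, data) => {
      if (data) {
        // Cache hit: send the stored body and skip the route handler.
        res.send(data);
        return;
      }

      // Cache miss: wrap res.send so the body is stored in Memcached
      // (lifetime in seconds) before it is sent to the client.
      res.sendResponse = res.send;
      res.send = (body) => {
        memcached.set(key, body, duration * 60, (setErr) => {
          // Write errors are ignored; the client still gets the response.
        });
        res.sendResponse(body);
      };
      next();
    });
  };
};

// Finally, use the middleware, which ensures that no request
// for this endpoint is served before it is checked against
// our cache storage.
app.get('/products', memcachedMiddleware(20), (req, res) => {
  // [...]
});
```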

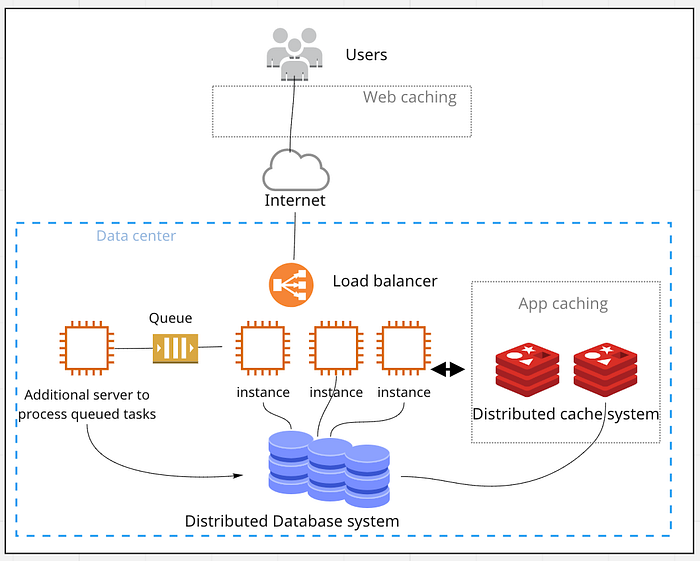

In the end, this is what our high-level app design looks like:

For a detailed step-by-step tutorial on this topic, I recommend reading the full article “How to Optimize Node Requests with Simple Caching Strategies” by Chris Nwamba.

Summary notes

Combined, web and application caching are significant tools for increasing the performance and responsiveness of an app instance, and they are essential in modern distributed systems. We can now upgrade our design from the previous article, “How to Scale Your Applications: 5 min read”, to get a clear view of the core message of this article:

Further reading: